Overview

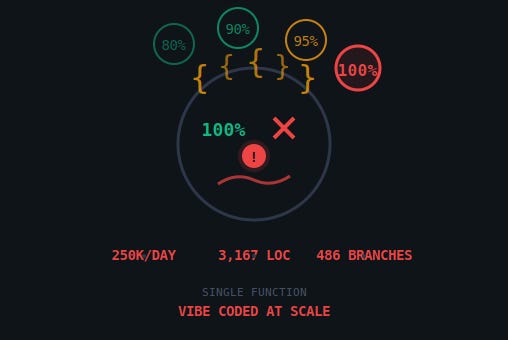

In late March 2026, a packaging error at Anthropic inadvertently exposed approximately 512,000 lines of Claude Code’s source code. While the leak itself was accidental, the content of the code sparked significant industry debate. The exposed source revealed architectural patterns consistent with unchecked AI-generated output: a single 3,167-line function with 486 branch points, monolithic files exceeding 46,000 lines, and regex-based sentiment detection inside a product built on one of the world’s most capable language models. A documented bug that reportedly wasted about 250,000 API calls per day had been shipped regardless. The incident raises serious questions about the security and reliability of AI-written production systems, particularly those used in agentic software development pipelines.

Technical Analysis

The leaked print.ts file contained a single function housing logically distinct subsystems: the agent run loop, SIGINT handling, rate limiting, AWS authentication, MCP lifecycle management, plugin loading, team-lead polling via a while(true) loop, model switching, and turn interruption recovery. Security-relevant concerns include:

- Uncontrolled complexity: 12 levels of nesting and 486 branch points in one function make static analysis, fuzzing, and code review near-impossible at scale.

- Primitive input handling: Use of regex patterns like `/\b(wtf|shit|fuck|horrible|awful|terrible)\b/i` for sentiment classification bypasses the very LLM capabilities the product is built upon, suggesting inconsistent design discipline.

- Known defect in production: A documented bug causing ~250,000 unnecessary API calls per day was shipped and left unresolved, indicating insufficient pre-release security and quality gates.

- Monolithic file sizes: Files of 25,000–46,000 lines resist meaningful human audit, creating blind spots for embedded logic errors or subtle malicious patterns.
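
To make the keyword-matching concern concrete, the sketch below applies the quoted pattern to three made-up messages. Only the regex comes from the analysis above; the helper name `looksNegative` and the sample strings are assumptions for illustration and do not reflect how the leaked code actually uses the pattern.

```typescript
// Pattern quoted in the analysis above; everything else here is illustrative.
const NEGATIVE_WORDS = /\b(wtf|shit|fuck|horrible|awful|terrible)\b/i;

// Hypothetical helper: returns true when the keyword pattern matches.
function looksNegative(message: string): boolean {
  return NEGATIVE_WORDS.test(message);
}

// Made-up sample messages, chosen to show both failure directions.
const samples: string[] = [
  "This is awful, nothing works", // frustrated, contains a listed word
  "Not terrible at all, nice work", // positive, but contains "terrible"
  "I have asked three times and it still fails", // frustrated, no listed word
];

for (const sample of samples) {
  console.log(`${looksNegative(sample)}\t${sample}`);
}
```

A keyword list cannot see negation or context, so the second message is flagged despite being positive and the third is missed despite being a complaint.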

The self-referential development model, in which Claude Code is written by Claude Code, amplifies these risks. Without robust human-in-the-loop review, AI systems can propagate and entrench poor patterns across codebases at scale.

Framework Mapping

- AML.T0010 (ML Supply Chain Compromise): Enterprise users of Claude Code inherit opaque, AI-generated code of questionable integrity, expanding the attack surface for downstream compromise.

- AML.T0047 (ML-Enabled Product or Service): The product itself is the artefact, so vulnerabilities in its codebase directly affect users relying on it for agentic coding tasks.

- LLM08 (Excessive Agency): Allowing an LLM to autonomously author 100% of production code, including its own development tooling, without sufficient human oversight is a textbook excessive agency scenario.

- LLM09 (Overreliance): Public claims of “100% AI-written” code without defined metrics encouraged uncritical trust in AI output quality, masking structural deficiencies.

- LLM05 (Supply Chain Vulnerabilities): Organisations integrating Claude Code into CI/CD pipelines inherit these architectural risks as a supply chain dependency.

Impact Assessment

Direct users of Claude Code, particularly enterprise engineering teams, are most exposed. Complex, untestable code increases the probability of undetected logic errors, security bypasses, and operational failures. The known API-waste bug suggests inadequate cost controls and monitoring. Broader industry impact includes erosion of confidence in “AI-written” software quality claims and potential regulatory scrutiny of unverified productivity statistics.
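
As one illustration of the kind of cost control the API-waste bug points to, a client could count outbound calls per day and raise a signal when a budget is exceeded. The sketch below is a minimal, in-memory version under stated assumptions: the class name `DailyCallBudget`, the per-UTC-day window, and the limit value are all hypothetical and are not taken from Claude Code.

```typescript
// Hypothetical budget guard: counts calls per UTC day and reports overruns.
class DailyCallBudget {
  private day = "";
  private count = 0;
  private readonly limit: number;

  constructor(limit: number) {
    this.limit = limit;
  }

  // Records one call; returns false once the day's count exceeds the limit.
  record(now: Date = new Date()): boolean {
    const today = now.toISOString().slice(0, 10); // YYYY-MM-DD in UTC
    if (today !== this.day) {
      // First call of a new day: reset the counter.
      this.day = today;
      this.count = 0;
    }
    this.count += 1;
    return this.count <= this.limit;
  }

  get used(): number {
    return this.count;
  }
}

// Usage with an arbitrary limit of 3 calls and a fixed, arbitrary timestamp.
const budget = new DailyCallBudget(3);
const noon = new Date("2030-01-01T12:00:00Z");
const withinBudget = [1, 2, 3, 4].map(() => budget.record(noon));
console.log(withinBudget, budget.used);
```

In a real deployment the counter would live in shared storage and feed an alerting system rather than a return value; the point here is only that a runaway call pattern is cheap to detect once calls are counted at all.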

Mitigation & Recommendations

- Enforce mandatory human code review gates for AI-generated pull requests, regardless of claimed automation percentage.

- Apply static analysis and complexity thresholds (e.g., cyclomatic complexity limits) to AI output before merge.

- Treat AI-generated codebases as untrusted third-party dependencies requiring the same supply chain due diligence.

- Do not ship documented defects; implement defect-blocking CI policies.

- Avoid using primitive heuristics (regex, keyword lists) where the product’s core capability (LLM inference) is both available and more appropriate.
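
The complexity-threshold recommendation above can be sketched as a small pre-merge check. This is a deliberately crude, text-based approximation: `approxBranchPoints`, `checkFile`, and the `Limits` values are hypothetical names and numbers chosen for illustration, and the counter can miscount when keywords appear inside strings or comments. A production gate would more plausibly rely on an AST-based linter, for example ESLint's core `complexity`, `max-depth`, and `max-lines-per-function` rules.

```typescript
// Hypothetical thresholds; real values would be tuned per codebase.
interface Limits {
  maxLines: number;
  maxBranchPoints: number;
}

// Crude approximation: counts branching keywords and short-circuit operators
// in raw text. It does not parse the code, so it is a rough signal only.
function approxBranchPoints(source: string): number {
  const pattern = /\b(if|for|while|case|catch)\b|&&|\|\|/g;
  return (source.match(pattern) ?? []).length;
}

// Returns a list of human-readable violations; empty means the file passes.
function checkFile(name: string, source: string, limits: Limits): string[] {
  const problems: string[] = [];
  const lines = source.split("\n").length;
  const branches = approxBranchPoints(source);
  if (lines > limits.maxLines) {
    problems.push(`${name}: ${lines} lines exceeds limit of ${limits.maxLines}`);
  }
  if (branches > limits.maxBranchPoints) {
    problems.push(
      `${name}: ~${branches} branch points exceeds limit of ${limits.maxBranchPoints}`,
    );
  }
  return problems;
}

// Usage on a tiny made-up snippet with intentionally strict limits.
const snippet = "if (a && b) {\n  for (;;) {}\n}\n";
const violations = checkFile("example.ts", snippet, { maxLines: 3, maxBranchPoints: 2 });
console.log(violations);
```

A CI job could fail the build whenever the returned list is non-empty, which turns the threshold from a guideline into a merge blocker.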

References

- Original article: https://techtrenches.dev/p/the-snake-that-ate-itself-what-claude